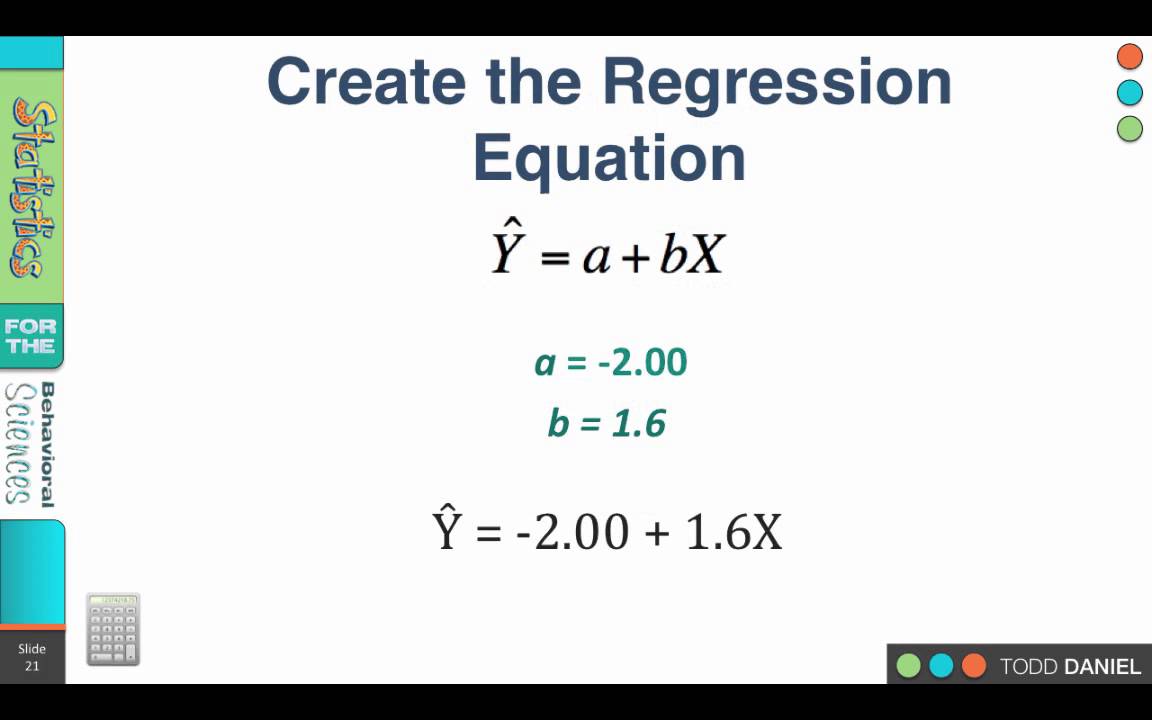

- Basic algebra linear regression equations


Scalar multiplication is defined as the product of each element of the vector by the scalar. More specifically, if \(\alpha\) is a scalar and \(v\) is a vector, then \(u = \alpha v\) is defined as \(u_i = \alpha v_i\). Note that this is exactly how Python implements scalar multiplication with a vector when the vector is stored as a NumPy array.

In mathematics, a set is a collection of objects. Sets were discussed as a data structure in Chapter 2; here we look at them from a mathematical point of view, using mathematical language. As shown before, sets are usually denoted by braces \(\{\}\).

Linear regression models have long been used by statisticians, computer scientists, and others. For example, a statistician might want to relate the weights of individuals to their heights using a linear regression model. In its basic format, a linear regression equation writes the response as a linear combination of the predictors. Certain nonlinear functions can also be modified with algebra to mimic the linear form. If (x, z, y) is one of the data elements, you use (log(x), log(z), log(y)) as a data element for the linear regression model.
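The log transformation described above can be sketched in a few lines of NumPy. This is a minimal illustration, not the original author's code: it assumes the underlying relationship has the power-law form \(y = c\,x^{b_1} z^{b_2}\), so that \(\log y = b_0 + b_1 \log x + b_2 \log z\) with \(b_0 = \log c\). The function name `fit_log_linear` and the data are hypothetical, made up for this example.

```python
import numpy as np

def fit_log_linear(x, z, y):
    """Fit log(y) = b0 + b1*log(x) + b2*log(z) by ordinary least squares.

    Hypothetical helper for illustration; all inputs must be positive.
    """
    x, z, y = (np.asarray(a, dtype=float) for a in (x, z, y))
    # Each data element (x, z, y) becomes (log(x), log(z), log(y)).
    A = np.column_stack([np.ones_like(x), np.log(x), np.log(z)])
    coeffs, *_ = np.linalg.lstsq(A, np.log(y), rcond=None)
    return coeffs  # b0, b1, b2

# Synthetic, noise-free data generated from y = 3 * x**2 * sqrt(z)
x = np.array([1.0, 2.0, 3.0, 4.0, 5.0])
z = np.array([2.0, 1.0, 4.0, 3.0, 6.0])
y = 3.0 * x**2 * np.sqrt(z)

b0, b1, b2 = fit_log_linear(x, z, y)
print("c  =", np.exp(b0))  # multiplicative constant recovered from the intercept
print("b1 =", b1, "b2 =", b2)
```

Because the fit is done on the logarithms, it minimizes squared error in \(\log y\) rather than in \(y\) itself, which matters once the data contain noise.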

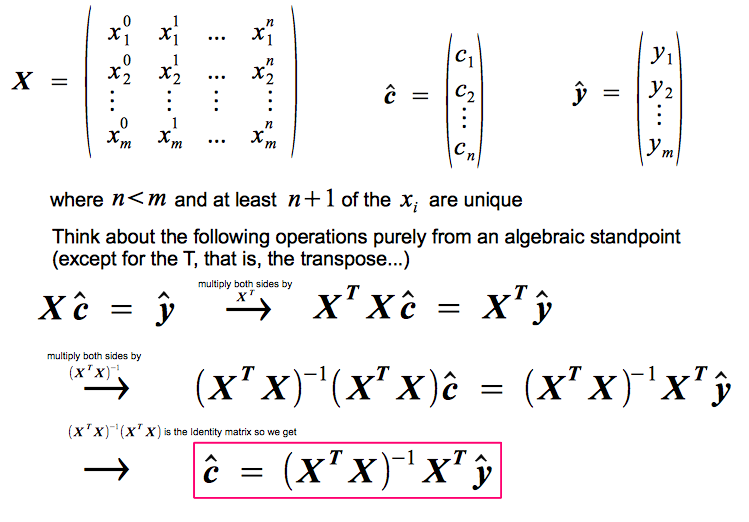

Viewing \(y\) as a linear function of \(x\), the least squares method finds the linear function \(L\) that minimizes the sum of the squares of the errors.
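As a rough sketch of what minimizing the sum of squared errors means in practice, the snippet below fits \(L(x) = mx + c\) to a handful of points using the standard closed-form slope and intercept. The helper names `least_squares_line` and `sum_squared_errors` and the data points are assumptions introduced for this example.

```python
import numpy as np

def least_squares_line(x, y):
    """Return slope and intercept of the line minimizing the sum of squared errors."""
    x = np.asarray(x, dtype=float)
    y = np.asarray(y, dtype=float)
    x_mean, y_mean = x.mean(), y.mean()
    slope = np.sum((x - x_mean) * (y - y_mean)) / np.sum((x - x_mean) ** 2)
    intercept = y_mean - slope * x_mean
    return slope, intercept

def sum_squared_errors(x, y, slope, intercept):
    """Sum of squared differences between y and the line's predictions."""
    residuals = np.asarray(y, dtype=float) - (slope * np.asarray(x, dtype=float) + intercept)
    return float(np.sum(residuals ** 2))

# Made-up data for illustration
x = np.array([0.0, 1.0, 2.0, 3.0, 4.0])
y = np.array([1.1, 2.9, 5.2, 7.1, 8.8])

m, c = least_squares_line(x, y)
best = sum_squared_errors(x, y, m, c)

# Any other line should do no better than the fitted one.
worse = sum_squared_errors(x, y, m + 0.1, c)
print(m, c, best, worse)
```

The same coefficients can be obtained with `np.polyfit(x, y, 1)`; the explicit formulas are shown here only to make the minimized quantity visible.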

We find that

\[S_{y,\hat{y}} = S_{y,bx} = b\,S_{yx}.\]

Recalling that \(S_{yx} = r_{yx} S_y S_x\) and \(b = r_{yx} S_y / S_x\), one immediately arrives at

\[S_{y,\hat{y}} = r_{yx}^2 S_y^2 = S_{\hat{y}}^2. \tag{5.8}\]

Here \(S_{y,\hat{y}}\) is the covariance between the observed scores \(y\) and the predicted scores \(\hat{y}\). Calculation of the covariance between the predicted scores and error scores proceeds in much the same way.
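The covariance identity \(S_{y,\hat{y}} = r_{yx}^2 S_y^2 = S_{\hat{y}}^2\) can be checked numerically. The sketch below is only a sanity check on made-up data, assuming sample statistics with an \(n - 1\) denominator and predicted scores \(\hat{y} = a + bx\) with \(b = r_{yx} S_y / S_x\); the variable names are chosen for this example.

```python
import numpy as np

# Made-up data for illustration
x = np.array([1.0, 2.0, 3.0, 4.0, 5.0, 6.0])
y = np.array([2.0, 1.0, 4.0, 3.0, 7.0, 5.0])

s_x = x.std(ddof=1)                    # sample standard deviation of x
s_y = y.std(ddof=1)                    # sample standard deviation of y
s_yx = np.cov(y, x, ddof=1)[0, 1]      # sample covariance of y and x
r_yx = s_yx / (s_y * s_x)              # correlation coefficient

b = r_yx * s_y / s_x                   # regression slope
a = y.mean() - b * x.mean()            # regression intercept
y_hat = a + b * x                      # predicted scores
errors = y - y_hat                     # error scores

cov_y_yhat = np.cov(y, y_hat, ddof=1)[0, 1]
var_yhat = y_hat.var(ddof=1)
cov_yhat_err = np.cov(y_hat, errors, ddof=1)[0, 1]

print(np.isclose(cov_y_yhat, r_yx**2 * s_y**2))  # covariance of y and y_hat vs r^2 * S_y^2
print(np.isclose(cov_y_yhat, var_yhat))          # covariance of y and y_hat vs variance of y_hat
print(np.isclose(cov_yhat_err, 0.0))             # predicted and error scores are uncorrelated
```

The last check reflects the standard result that, for a least squares fit, the predicted scores and the error scores have zero covariance.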